Add initial documentation and example projects for ZML, covering how‑to guides, tutorials, and benchmark examples.

This commit is contained in:

parent

266da6d4be

commit

eded305649

77

docs/howtos/add_weights.md

Normal file

77

docs/howtos/add_weights.md

Normal file

@ -0,0 +1,77 @@

|

||||

|

||||

# Adding Weights Files

|

||||

|

||||

Our [first model](../tutorials/write_first_model.md) did not need any weights files.

|

||||

We just created weights and bias at runtime.

|

||||

|

||||

But real-world models typically need weights files, and maybe some other

|

||||

supporting files.

|

||||

|

||||

We recommend, for easy deployments, you upload those files. In many instances,

|

||||

you will use a site like [🤗 Hugging Face](https://huggingface.co).

|

||||

|

||||

We also recommend to add a `weights.bzl` file to your project root directory, so

|

||||

you don't "pollute" your build file with long URLs and SHAs:

|

||||

|

||||

```python

|

||||

load("@bazel_tools//tools/build_defs/repo:http.bzl", "http_file")

|

||||

|

||||

def _weights_impl(mctx):

|

||||

http_file(

|

||||

name = "com_github_zml_cdn_mnist",

|

||||

downloaded_file_path = "mnist.pt",

|

||||

sha256 = "d8a25252e28915e147720c19223721f0f53e3317493727ca754a2dd672450ba9",

|

||||

url = "https://github.com/ggerganov/ggml/raw/18703ad600cc68dbdb04d57434c876989a841d12/examples/mnist/models/mnist/mnist_model.state_dict",

|

||||

)

|

||||

|

||||

http_file(

|

||||

name = "com_github_zml_cdn_mnist_data",

|

||||

downloaded_file_path = "mnist.ylc",

|

||||

sha256 = "0fa7898d509279e482958e8ce81c8e77db3f2f8254e26661ceb7762c4d494ce7",

|

||||

url = "https://github.com/ggerganov/ggml/raw/18703ad600cc68dbdb04d57434c876989a841d12/examples/mnist/models/mnist/t10k-images.idx3-ubyte",

|

||||

)

|

||||

|

||||

return mctx.extension_metadata(

|

||||

reproducible = True,

|

||||

root_module_direct_deps = "all",

|

||||

root_module_direct_dev_deps = [],

|

||||

)

|

||||

|

||||

weights = module_extension(

|

||||

implementation = _weights_impl,

|

||||

)

|

||||

```

|

||||

|

||||

The above `weights.bzl` shows how we load files for MNIST:

|

||||

|

||||

- `mnist.pt` (model weights)

|

||||

- `mnist.ylc` (dataset for picking sample images)

|

||||

|

||||

Then, in your `BUILD.bazel`, you can refer to the files you defined above, in

|

||||

the following way:

|

||||

|

||||

```python

|

||||

zig_cc_binary(

|

||||

name = "mnist",

|

||||

args = [

|

||||

"$(location @com_github_zml_cdn_mnist//file)",

|

||||

"$(location @com_github_zml_cdn_mnist_data//file)",

|

||||

],

|

||||

data = [

|

||||

"@com_github_zml_cdn_mnist//file",

|

||||

"@com_github_zml_cdn_mnist_data//file",

|

||||

],

|

||||

main = "mnist.zig",

|

||||

deps = [

|

||||

"//async",

|

||||

"//zml",

|

||||

],

|

||||

)

|

||||

```

|

||||

|

||||

See how:

|

||||

|

||||

- we use `data = [ ... ]` to reference the files in `weights.bzl`

|

||||

- we use `args = [ ... ]` to pass the files as command-line arguments to the

|

||||

MNIST executable at runtime, automatically.

|

||||

|

||||

136

docs/howtos/deploy_on_server.md

Normal file

136

docs/howtos/deploy_on_server.md

Normal file

@ -0,0 +1,136 @@

|

||||

|

||||

# Deploying Models on a Server

|

||||

|

||||

To run models on remote GPU/TPU machines, it is inconvenient to have to check

|

||||

out your project’s repository and compile it on every target. Instead, you more

|

||||

likely want to cross-compile right from your development machine, **for every**

|

||||

supported target architecture and accelerator.

|

||||

|

||||

See [Getting Started with ZML](../tutorials/getting_started.md) if you need more

|

||||

information on how to compile a model.

|

||||

|

||||

**Here's a quick recap:**

|

||||

|

||||

You can compile models for accelerator runtimes by appending one or more of the

|

||||

following arguments to the command line when compiling / running a model:

|

||||

|

||||

- NVIDIA CUDA: `--@zml//runtimes:cuda=true`

|

||||

- AMD RoCM: `--@zml//runtimes:rocm=true`

|

||||

- Google TPU: `--@zml//runtimes:tpu=true`

|

||||

- **AVOID CPU:** `--@zml//runtimes:cpu=false`

|

||||

|

||||

So, to run the OpenLLama model from above **on your development machine**

|

||||

housing an NVIDIA GPU, run the following:

|

||||

|

||||

```

|

||||

cd examples

|

||||

bazel run -c opt //llama:OpenLLaMA-3B --@zml//runtimes:cuda=true

|

||||

```

|

||||

|

||||

|

||||

## Cross-Compiling and creating a TAR for your server

|

||||

|

||||

Currently, ZML lets you cross-compile to one of the following target

|

||||

architectures:

|

||||

|

||||

- Linux X86_64: `--platforms=@zml//platforms:linux_amd64`

|

||||

- Linux ARM64: `--platforms=@zml//platforms:linux_arm64`

|

||||

- MacOS ARM64: `--platforms=@zml//platforms:macos_arm64`

|

||||

|

||||

As an example, here is how you build above OpenLLama for CUDA on Linux X86_64:

|

||||

|

||||

```

|

||||

cd examples

|

||||

bazel build -c opt //llama:OpenLLaMA-3B \

|

||||

--@zml//runtimes:cuda=true \

|

||||

--@zml//runtimes:cpu=false \

|

||||

--platforms=@zml//platforms:linux_amd64

|

||||

```

|

||||

|

||||

### Creating the TAR

|

||||

|

||||

When cross-compiling, it is convenient to produce a compressed TAR file that

|

||||

you can copy to the target host, so you can unpack it there and run the model.

|

||||

|

||||

Let's use MNIST as example.

|

||||

|

||||

If not present already, add an "archive" target to the model's `BUILD.bazel`,

|

||||

like this:

|

||||

|

||||

```python

|

||||

load("@aspect_bazel_lib//lib:tar.bzl", "mtree_spec", "tar")

|

||||

|

||||

# Manifest, required for building the tar archive

|

||||

mtree_spec(

|

||||

name = "mtree",

|

||||

srcs = [":mnist"],

|

||||

)

|

||||

|

||||

# Create a tar archive from the above manifest

|

||||

tar(

|

||||

name = "archive",

|

||||

srcs = [":mnist"],

|

||||

args = [

|

||||

"--options",

|

||||

"zstd:compression-level=9",

|

||||

],

|

||||

compress = "zstd",

|

||||

mtree = ":mtree",

|

||||

)

|

||||

```

|

||||

|

||||

... and then build the TAR archive:

|

||||

|

||||

```

|

||||

# cd examples

|

||||

bazel build -c opt //mnist:archive \

|

||||

--@zml//runtimes:cuda=true \

|

||||

--@zml//runtimes:cpu=false \

|

||||

--platforms=@zml//platforms:linux_amd64

|

||||

```

|

||||

|

||||

Note the `//mnist:archive` notation.

|

||||

|

||||

The resulting tar file will be in `bazel-bin/mnist/archive.tar.zst`.

|

||||

|

||||

### Run it on the server

|

||||

|

||||

You can copy the TAR archive onto your Linux X86_64 NVIDIA GPU server, untar

|

||||

and run it:

|

||||

|

||||

```bash

|

||||

# on your machine

|

||||

scp bazel-bin/mnist/archive.tar.zst destination-server:

|

||||

ssh destination-server # to enter the server

|

||||

|

||||

# ... on the server

|

||||

tar xvf archive.tar.zst

|

||||

./mnist \

|

||||

'mnist.runfiles/_main~_repo_rules~com_github_ggerganov_ggml_mnist/file/mnist.pt' \

|

||||

'mnist.runfiles/_main~_repo_rules~com_github_ggerganov_ggml_mnist_data/file/mnist.ylc'

|

||||

```

|

||||

|

||||

The easiest way to figure out the commandline arguments of an example model is

|

||||

to consult the model's `BUILD.bazel` and check out its `args` section. It will

|

||||

reference e.g. weights files that are defined either in the same `BUILD.bazel`

|

||||

file or in a `weights.bzl` file.

|

||||

|

||||

You can also consult the console output when running your model locally:

|

||||

|

||||

```bash

|

||||

bazel run //mnist

|

||||

|

||||

INFO: Analyzed target //mnist:mnist (0 packages loaded, 0 targets configured).

|

||||

INFO: Found 1 target...

|

||||

Target //mnist:mnist up-to-date:

|

||||

bazel-bin/mnist/mnist

|

||||

INFO: Elapsed time: 0.302s, Critical Path: 0.00s

|

||||

INFO: 3 processes: 3 internal.

|

||||

INFO: Build completed successfully, 3 total actions

|

||||

INFO: Running command line: bazel-bin/mnist/mnist ../_main~_repo_rules~com_github_ggerganov_ggml_mnist/file/mnist.pt ../_main~_repo_rules~com_github_ggerganov_ggml_mnist_data/file/mnist.ylc

|

||||

# ...

|

||||

```

|

||||

|

||||

You see the command line right up there. On the server, you just need to replace

|

||||

`../` with the 'runfiles' directory of your TAR.

|

||||

|

||||

376

docs/howtos/dockerize_models.md

Normal file

376

docs/howtos/dockerize_models.md

Normal file

@ -0,0 +1,376 @@

|

||||

|

||||

# Containerize a Model

|

||||

|

||||

A convenient way of [deploying a model](../howtos/deploy_on_server.md) is packaging

|

||||

it up in a Docker container. Thanks to bazel, this is really easy to do. You

|

||||

just have to append a few lines to your model's `BUILD.bazel`. Here is how it's

|

||||

done.

|

||||

|

||||

**Note:** This walkthrough will work with your installed container runtime, no

|

||||

matter if it's **Docker or e.g. Podman.** Also, we'll create images in the

|

||||

[OCI](https://github.com/opencontainers/image-spec) open image format.

|

||||

|

||||

Let's try containerizing our [first model](../tutorials/write_first_model.md), as it

|

||||

doesn't need any additional weights files. We'll see [down below](#adding-weights-and-data)

|

||||

how to add those. We'll also see how to add GPU/TPU support for our container

|

||||

there.

|

||||

|

||||

Bazel creates images from `.TAR` archives.

|

||||

|

||||

The steps required for containerization are:

|

||||

|

||||

1. Let bazel create a MANIFEST for the tar file to come.

|

||||

2. Let bazel create a TAR archive of everything needed for the model to run.

|

||||

- see also: [Deploying Models on a Server](../howtos/deploy_on_server.md), where

|

||||

we prepare a TAR file, and copy it to and run it on a remote GPU server.

|

||||

3. Let bazel create a container image for Linux X86_64.

|

||||

4. Let bazel load the image _(OPTIONAL)_.

|

||||

5. Let bazel push the image straight to the Docker registry.

|

||||

6. Let bazel [add weights and data](#adding-weights-and-data), GPU/TPU support

|

||||

_(OPTIONAL)_.

|

||||

|

||||

**Note:** every TAR archive we create (one in this example) becomes its own

|

||||

layer in the container image.

|

||||

|

||||

## Dockerizing our first model

|

||||

|

||||

We need to add a few "imports" at the beginning of our `BUILD.bazel` so we can

|

||||

use their rules to define our 5 additional targets:

|

||||

|

||||

```python

|

||||

load("@aspect_bazel_lib//lib:tar.bzl", "mtree_spec", "tar")

|

||||

load("@aspect_bazel_lib//lib:transitions.bzl", "platform_transition_filegroup")

|

||||

load("@rules_oci//oci:defs.bzl", "oci_image", "oci_load", "oci_push")

|

||||

|

||||

zig_cc_binary(

|

||||

name = "simple_layer",

|

||||

main = "main.zig",

|

||||

deps = [

|

||||

"@zml//async",

|

||||

"@zml//zml",

|

||||

],

|

||||

)

|

||||

```

|

||||

|

||||

|

||||

### 1. The Manifest

|

||||

|

||||

To get started, let's make bazel generate a manifest that will be used when

|

||||

creating the TAR archive.

|

||||

|

||||

```python

|

||||

# Manifest created from the simple_layer binary and friends

|

||||

mtree_spec(

|

||||

name = "mtree",

|

||||

srcs = [":simple_layer"],

|

||||

)

|

||||

```

|

||||

|

||||

It is as easy as that: we define that we want everything needed for our binary

|

||||

to be included in the manifest.

|

||||

|

||||

|

||||

### 2. The TAR

|

||||

|

||||

Creating the TAR archive is equally easy; it's just a few more lines of bazel:

|

||||

|

||||

```python

|

||||

# Create a tar archive from the above manifest

|

||||

tar(

|

||||

name = "archive",

|

||||

srcs = [":simple_layer"],

|

||||

args = [

|

||||

"--options",

|

||||

"zstd:compression-level=9",

|

||||

],

|

||||

compress = "zstd",

|

||||

mtree = ":mtree",

|

||||

)

|

||||

```

|

||||

|

||||

Note that we specify high **zstd** compression, which serves two purposes:

|

||||

avoiding large TAR files, and also: creating TAR files that are quick to

|

||||

extract.

|

||||

|

||||

|

||||

### 3. The Image

|

||||

|

||||

Creating the actual image is a two-step process:

|

||||

|

||||

- First, we use a rule that creates an

|

||||

[OCI](https://github.com/opencontainers/image-spec) image (open image

|

||||

format). But we're not done yet.

|

||||

- Second, we force the actual OCI image to be built for `Linux X86_64` always,

|

||||

regardless of the host we're building the image **on**.

|

||||

|

||||

```python

|

||||

# The actual docker image, with entrypoint, created from tar archive

|

||||

oci_image(

|

||||

name = "image_",

|

||||

base = "@distroless_cc_debian12",

|

||||

entrypoint = ["./{}/simple_layer".format(package_name())],

|

||||

tars = [":archive"],

|

||||

)

|

||||

```

|

||||

|

||||

See how we use string interpolation to fill in the folder name for the

|

||||

container's entrypoint?

|

||||

|

||||

|

||||

Next, we use a transition rule to force the container to be built for

|

||||

Linux X86_64:

|

||||

|

||||

```python

|

||||

# We always want to create the image for Linux

|

||||

platform_transition_filegroup(

|

||||

name = "image",

|

||||

srcs = [":image_"],

|

||||

target_platform = "@zml//platforms:linux_amd64",

|

||||

)

|

||||

```

|

||||

|

||||

And that's almost it! You can already build the image:

|

||||

|

||||

```

|

||||

# cd examples

|

||||

bazel build -c opt //simple_layer:image

|

||||

|

||||

INFO: Analyzed target //simple_layer:image (1 packages loaded, 8 targets configured).

|

||||

INFO: Found 1 target...

|

||||

Target //simple_layer:image up-to-date:

|

||||

bazel-out/k8-dbg-ST-f832ad0148ae/bin/simple_layer/image_

|

||||

INFO: Elapsed time: 0.279s, Critical Path: 0.00s

|

||||

INFO: 1 process: 1 internal.

|

||||

INFO: Build completed successfully, 1 total action

|

||||

```

|

||||

|

||||

... and inspect `./bazel-out`. Bazel tells you the exact path to the `image_`.

|

||||

|

||||

|

||||

### 4. The Load

|

||||

|

||||

While inspecting the image is surely interesting, we usually want to load the

|

||||

image so we can run it.

|

||||

|

||||

There is a bazel rule for that: `oci_load`. When we append the following lines

|

||||

to `BUILD.bazel`:

|

||||

|

||||

```python

|

||||

# Load will immediately load the image (eg: docker load)

|

||||

oci_load(

|

||||

name = "load",

|

||||

image = ":image",

|

||||

repo_tags = [

|

||||

"distroless/simple_layer:latest",

|

||||

],

|

||||

)

|

||||

```

|

||||

... then we can load the image and run it with the following commands:

|

||||

|

||||

```

|

||||

bazel run -c opt //simple_layer:load

|

||||

docker run --rm distroless/simple_layer:latest

|

||||

```

|

||||

|

||||

|

||||

### 5. The Push

|

||||

|

||||

We just need to add one more target to the build file before we can push the

|

||||

image to a container registry:

|

||||

|

||||

```python

|

||||

# Bazel target for pushing the Linux image to the docker registry

|

||||

oci_push(

|

||||

name = "push",

|

||||

image = ":image",

|

||||

remote_tags = ["latest"],

|

||||

# override with -- --repository foo.bar/org/image

|

||||

repository = "index.docker.io/renerocksai/simple_layer",

|

||||

)

|

||||

```

|

||||

|

||||

This will push the `simple_layer` image with the tag `latest` (you can add more)

|

||||

to the docker registry:

|

||||

|

||||

```

|

||||

bazel run -c opt //simple_layer:push

|

||||

```

|

||||

|

||||

When dealing with maybe a public and a private container registry - or if you

|

||||

just want to try it out **right now**, you can always override the repository on

|

||||

the command line:

|

||||

|

||||

```

|

||||

bazel run -c opt //simple_layer:push -- --repository my.server.com/org/image

|

||||

```

|

||||

|

||||

|

||||

## Adding weights and data

|

||||

|

||||

Dockerizing a model that doesn't need any weights was easy. But what if you want

|

||||

to create a complete care-free package of a model plus all required weights and

|

||||

supporting files?

|

||||

|

||||

We'll use the [MNIST

|

||||

example](https://github.com/zml/zml/tree/master/examples/mnist) to illustrate

|

||||

how to build Docker images that also contain data files.

|

||||

|

||||

You can `bazel run -c opt //mnist:push -- --repository

|

||||

index.docker.io/my_org/zml_mnist` in the `./examples` folder if you want to try

|

||||

it out.

|

||||

|

||||

**Note: Please add one more of the following parameters to specify all the

|

||||

platforms your containerized model should support.**

|

||||

|

||||

- NVIDIA CUDA: `--@zml//runtimes:cuda=true`

|

||||

- AMD RoCM: `--@zml//runtimes:rocm=true`

|

||||

- Google TPU: `--@zml//runtimes:tpu=true`

|

||||

- **AVOID CPU:** `--@zml//runtimes:cpu=false`

|

||||

|

||||

**Example:**

|

||||

|

||||

```

|

||||

bazel run //mnist:push -c opt --@zml//runtimes:cuda=true -- --repository index.docker.io/my_org/zml_mnist

|

||||

```

|

||||

|

||||

|

||||

### Manifest and Archive

|

||||

|

||||

We only add one more target to the `BUILD.bazel` to construct the commandline

|

||||

for the `entrypoint` of the container. All other steps basically remain the

|

||||

same.

|

||||

|

||||

Let's start with creating the manifest and archive:

|

||||

|

||||

```python

|

||||

load("@aspect_bazel_lib//lib:expand_template.bzl", "expand_template")

|

||||

load("@aspect_bazel_lib//lib:tar.bzl", "mtree_spec", "tar")

|

||||

load("@aspect_bazel_lib//lib:transitions.bzl", "platform_transition_filegroup")

|

||||

load("@rules_oci//oci:defs.bzl", "oci_image", "oci_load", "oci_push")

|

||||

load("@zml//bazel:zig.bzl", "zig_cc_binary")

|

||||

|

||||

# The executable

|

||||

zig_cc_binary(

|

||||

name = "mnist",

|

||||

args = [

|

||||

"$(location @com_github_ggerganov_ggml_mnist//file)",

|

||||

"$(location @com_github_ggerganov_ggml_mnist_data//file)",

|

||||

],

|

||||

data = [

|

||||

"@com_github_ggerganov_ggml_mnist//file",

|

||||

"@com_github_ggerganov_ggml_mnist_data//file",

|

||||

],

|

||||

main = "mnist.zig",

|

||||

deps = [

|

||||

"@zml//async",

|

||||

"@zml//zml",

|

||||

],

|

||||

)

|

||||

|

||||

# Manifest created from the executable (incl. its data: weights and dataset)

|

||||

mtree_spec(

|

||||

name = "mtree",

|

||||

srcs = [":mnist"],

|

||||

)

|

||||

|

||||

# Create a tar archive from the above manifest

|

||||

tar(

|

||||

name = "archive",

|

||||

srcs = [":mnist"],

|

||||

args = [

|

||||

"--options",

|

||||

"zstd:compression-level=9",

|

||||

],

|

||||

compress = "zstd",

|

||||

mtree = ":mtree",

|

||||

)

|

||||

```

|

||||

|

||||

### Entrypoint

|

||||

|

||||

Our container entrypoint commandline is not just the name of the executable

|

||||

anymore, as we need to pass the weights file and the test dataset to MNIST. A

|

||||

simple string interpolation will not be enough.

|

||||

|

||||

For this reason, we use the `expand_template` rule, like this:

|

||||

|

||||

```python

|

||||

# A convenience template for creating the "command line" for the entrypoint

|

||||

expand_template(

|

||||

name = "entrypoint",

|

||||

data = [

|

||||

":mnist",

|

||||

"@com_github_ggerganov_ggml_mnist//file",

|

||||

"@com_github_ggerganov_ggml_mnist_data//file",

|

||||

],

|

||||

substitutions = {

|

||||

":model": "$(rlocationpath @com_github_ggerganov_ggml_mnist//file)",

|

||||

":data": "$(rlocationpath @com_github_ggerganov_ggml_mnist_data//file)",

|

||||

},

|

||||

template = [

|

||||

"./{}/mnist".format(package_name()),

|

||||

"./{}/mnist.runfiles/:model".format(package_name()),

|

||||

"./{}/mnist.runfiles/:data".format(package_name()),

|

||||

],

|

||||

)

|

||||

```

|

||||

|

||||

- `data`, which is identical to `data` in the `mnist` target used for running

|

||||

the model, tells bazel which files are needed.

|

||||

- in `substitutions` we define what `:model` and `:data` need to be replaced

|

||||

with

|

||||

- in `template`, we construct the actual entrypoint conmandline

|

||||

|

||||

|

||||

### Image, Push

|

||||

|

||||

From here on, everything is analog to the `simple_layer` example, with one

|

||||

exception: in the `image_` target, we don't fill in the `entrypoint` directly,

|

||||

but use the expanded template, which we conveniently named `entrypoint` above.

|

||||

|

||||

|

||||

```python

|

||||

|

||||

# The actual docker image, with entrypoint, created from tar archive

|

||||

oci_image(

|

||||

name = "image_",

|

||||

base = "@distroless_cc_debian12",

|

||||

# the entrypoint comes from the expand_template rule `entrypoint` above

|

||||

entrypoint = ":entrypoint",

|

||||

tars = [":archive"],

|

||||

)

|

||||

|

||||

# We always want to create the image for Linux

|

||||

platform_transition_filegroup(

|

||||

name = "image",

|

||||

srcs = [":image_"],

|

||||

target_platform = "@zml//platforms:linux_amd64",

|

||||

)

|

||||

|

||||

# Load will immediately load the image (eg: docker load)

|

||||

oci_load(

|

||||

name = "load",

|

||||

image = ":image",

|

||||

repo_tags = [

|

||||

"distroless/mnist:latest",

|

||||

],

|

||||

)

|

||||

|

||||

# Bazel target for pushing the Linux image to our docker registry

|

||||

oci_push(

|

||||

name = "push",

|

||||

image = ":image",

|

||||

remote_tags = ["latest"],

|

||||

# override with -- --repository foo.bar/org/image

|

||||

repository = "index.docker.io/steeve/mnist",

|

||||

)

|

||||

```

|

||||

|

||||

|

||||

And that's it! With one simple bazel command, you can push a neatly packaged

|

||||

MNIST model, including weights and dataset, to the docker registry:

|

||||

|

||||

```

|

||||

bazel run //mnist:push --@zml//runtimes:cuda=true -- --repository index.docker.io/my_org/zml_mnist

|

||||

```

|

||||

|

||||

293

docs/howtos/howto_torch2zml.md

Normal file

293

docs/howtos/howto_torch2zml.md

Normal file

@ -0,0 +1,293 @@

|

||||

|

||||

# How to port Pytorch models to ZML ?

|

||||

|

||||

|

||||

## Requirements

|

||||

|

||||

We assume you already have a working ZML project,

|

||||

and you can run it with a Bazel command like `bazel run //my_project:torch2zml`.

|

||||

You can refer to [write your first model](../tutorials/write_first_model.md) to do so.

|

||||

We also assume that you know enough Python to run the reference implementation.

|

||||

|

||||

## Overview

|

||||

|

||||

Porting Neural Network implementations can be tedious. Some small errors can

|

||||

degrade the output of the model, in subtle or not so subtle ways. To track down

|

||||

errors in a model with four thousand layers, we best be organized.

|

||||

|

||||

By the way if you are interested in a specific model, be careful that not all

|

||||

implementations of a model you can find on Github are equivalent. Sometimes

|

||||

people introduce subtle bugs when porting across Python libraries. Ideally use

|

||||

the author's implementation, or at least one you have tested yourself.

|

||||

|

||||

**The recommended process is as follows:**

|

||||

|

||||

1. run the reference implementation on a known input, and sample layer activations

|

||||

2. start a ZML project and load the sampled reference activations

|

||||

3. start porting layers one by one, and test individual layers

|

||||

4. end-to-end test the model

|

||||

|

||||

## Sampling reference activations

|

||||

|

||||

Pytorch exposes "forward hooks" that allow to inspect the input/output of each

|

||||

`torch.nn.Module`. That way it is possible to create a dictionary with each

|

||||

layer input/output, keyed by the name of the layer.

|

||||

|

||||

The main caveat is that if you have a functional implementation that doesn't

|

||||

use `torch.nn.Module`, this technique won't work.

|

||||

|

||||

It is the easiest to start from a "huggingface" snippet, or a python script

|

||||

that calls the model of your choice on an example input. eg:

|

||||

|

||||

|

||||

|

||||

```python

|

||||

import torch

|

||||

import transformers

|

||||

|

||||

model_path = "meta-llama/Meta-Llama-3-8B"

|

||||

|

||||

pipeline = transformers.pipeline(

|

||||

"text-generation",

|

||||

model=model_path,

|

||||

model_kwargs={"torch_dtype": torch.float16},

|

||||

# device="cuda",

|

||||

token=token,

|

||||

)

|

||||

|

||||

prompt = "Q: What is the largest animal?\nA:"

|

||||

output = pipeline(prompt)

|

||||

print(output)

|

||||

```

|

||||

|

||||

Then edit the script to import [zml_utils](https://github.com/zml/zml/blob/master/tools/zml_utils.py).

|

||||

|

||||

`zml_utils.py` is standalone and currently it's not distributed as a python

|

||||

package, so the simplest way to use it, is to copy it next to your python

|

||||

script. Then wrap the model/pipeline in a `zml_utils.ActivationCollector`. The

|

||||

collector wraps the given model, and returns the original results AND the

|

||||

activations in a dict of `torch.Tensor` when it's being called. After that, you

|

||||

can save those activations to a `.pt` file.

|

||||

|

||||

```python

|

||||

import torch

|

||||

import transformers

|

||||

import zml_utils

|

||||

|

||||

model_path = "meta-llama/Meta-Llama-3-8B"

|

||||

|

||||

pipeline = transformers.pipeline(

|

||||

"text-generation",

|

||||

model=model_path,

|

||||

model_kwargs={"torch_dtype": torch.float16},

|

||||

# device="cuda",

|

||||

)

|

||||

model, tokenizer = pipeline.model, pipeline.tokenizer

|

||||

|

||||

prompt = "Q: What is the largest animal?\nA:"

|

||||

# Wrap the pipeline, and extract activations.

|

||||

# Activations files can be huge for big models,

|

||||

# so let's stop collecting after 1000 layers.

|

||||

pipeline = zml_utils.ActivationCollector(pipeline, max_layers=1000, stop_after_first_step=True)

|

||||

output, activations = pipeline(prompt)

|

||||

print(output)

|

||||

|

||||

# Save activations to a file.

|

||||

filename = model_path.split("/")[-1] + ".activations.pt"

|

||||

torch.save(activations, filename)

|

||||

print(f"Saved {len(activations)} activations to {filename}")

|

||||

```

|

||||

|

||||

Run this script: `python activations.py`

|

||||

|

||||

If you're using HuggingFace, make note of the local path where the model is

|

||||

saved, it should be something like `~/.cache/huggingface/hub/...`. (and should

|

||||

appear on the console when running the script). We will need it in the next

|

||||

steps.

|

||||

|

||||

## Loading model and activations in ZML

|

||||

|

||||

Let's create a basic ZML program that loads the activations and the Pytorch

|

||||

model. Put the following in `my_project/torch2zml.zig`.

|

||||

|

||||

```zig

|

||||

const std = @import("std");

|

||||

const log = std.log;

|

||||

|

||||

const asynk = @import("async");

|

||||

const zml = @import("zml");

|

||||

|

||||

pub fn main() !void {

|

||||

var gpa = std.heap.GeneralPurposeAllocator(.{}){};

|

||||

defer _ = gpa.deinit();

|

||||

try asynk.AsyncThread.main(gpa.allocator(), asyncMain, .{});

|

||||

}

|

||||

|

||||

pub fn asyncMain() !void {

|

||||

var gpa = std.heap.GeneralPurposeAllocator(.{}){};

|

||||

defer _ = gpa.deinit();

|

||||

const allocator = gpa.allocator();

|

||||

|

||||

const args = try std.process.argsAlloc(allocator);

|

||||

defer std.process.argsFree(allocator, args);

|

||||

|

||||

const model_path, const activations_path = args[1..3].*;

|

||||

|

||||

const activations = try zml.aio.torch.open(allocator, activations_path);

|

||||

defer activations.deinit();

|

||||

log.info("Found {} activations in {s}", .{ activations.buffers.count(), activations_path });

|

||||

|

||||

const model_weights = try zml.aio.detectFormatAndOpen(allocator, model_path);

|

||||

defer model_weights.deinit();

|

||||

log.info("Found {} model layers in {s}", .{ model_weights.buffers.count(), activations_path });

|

||||

}

|

||||

```

|

||||

|

||||

And add a `zig_cc_binary` target in `my_project/BUILD.bazel`:

|

||||

|

||||

```python

|

||||

load("@zml//bazel:zig.bzl", "zig_cc_binary")

|

||||

|

||||

zig_cc_binary(

|

||||

name = "torch2zml",

|

||||

main = "torch2zml.zig",

|

||||

deps = [

|

||||

"@zml//async",

|

||||

"@zml//zml",

|

||||

],

|

||||

)

|

||||

```

|

||||

|

||||

Now check that the weights can be loaded correctly using the bazel CLI.

|

||||

|

||||

```bash

|

||||

bazel build //my_project:torch2zml

|

||||

./bazel-bin/my_project/torch2zml /path/to/my/model.safetensors.index.json ./my_project/Meta-Llama-3-8B.activations.pt

|

||||

|

||||

info: Found 1108 activations in /Users/guw/Documents/zml/models/torch2zml/Meta-Llama-3-8B.activations.pt

|

||||

debug(zml_io): Loading shard: model-00004-of-00004.safetensors

|

||||

debug(zml_io): Loading shard: model-00001-of-00004.safetensors

|

||||

debug(zml_io): Loading shard: model-00002-of-00004.safetensors

|

||||

debug(zml_io): Loading shard: model-00003-of-00004.safetensors

|

||||

info: Found 291 model layers in /Users/guw/Documents/zml/models/torch2zml/Meta-Llama-3-8B.activations.pt

|

||||

```

|

||||

|

||||

## Loading an individual layer

|

||||

|

||||

In the above Zig code, the `model_weights` struct is a wrapper around a flat

|

||||

dictionary, containing an entry for each tensor in the model (similar to a

|

||||

"state dict"). Manipulating a dictionary is generally not very convenient, so

|

||||

let's convert it to a Zig struct.

|

||||

|

||||

Declare the following layer at the bottom of your file:

|

||||

|

||||

```zig

|

||||

const Mlp = struct {

|

||||

up_proj: zml.nn.Linear,

|

||||

gate_proj: zml.nn.Linear,

|

||||

down_proj: zml.nn.Linear,

|

||||

};

|

||||

```

|

||||

|

||||

The `zml.nn.Linear` is the equivalent of `torch.nn.Linear` and is defined by

|

||||

its `weight` and optional `bias` tensors.

|

||||

|

||||

To create such a struct from our `model_weights` dictionary, we can use the

|

||||

`zml.aio.populateModelWithPrefix` helper:

|

||||

|

||||

```zig

|

||||

pub fn asyncMain() !void {

|

||||

...

|

||||

const mlp_shape = try zml.aio.populateModelWithPrefix(Mlp, allocator, model_weights, "model.layers.0.mlp");

|

||||

log.info("layer.0.mlp: {}", .{mlp_shape});

|

||||

}

|

||||

```

|

||||

|

||||

Build and run, using previous commands.

|

||||

|

||||

Typical errors are of the form _"Layer not found: ..."_. This is typically due

|

||||

to the naming of layers in Zig not matching the naming in the file.

|

||||

Double-check everything and don't hesitate to print more things, e.g. in the

|

||||

Python script. Alternatively, Huggingface's web-interface allows to peek into

|

||||

`.safetensor` files.

|

||||

|

||||

|

||||

## Testing an individual layer

|

||||

|

||||

Finally, we are going to write the actual math code for our `MLP` layer.

|

||||

|

||||

```zig

|

||||

const Mlp = struct {

|

||||

up_proj: zml.nn.Linear,

|

||||

gate_proj: zml.nn.Linear,

|

||||

down_proj: zml.nn.Linear,

|

||||

|

||||

pub fn forward(self: Mlp, x: Tensor) Tensor {

|

||||

const proj = zml.call(self.up_proj, .forward, .{x});

|

||||

var output = zml.call(self.gate_proj, .forward, .{x});

|

||||

output = output.silu().mul(proj);

|

||||

return zml.call(self.down_proj, .forward, .{output});

|

||||

}

|

||||

};

|

||||

```

|

||||

|

||||

Note that we use `zml.call` instead of directly calling

|

||||

`self.up_proj.forward(x)`. Calling `forward` directly results in the same

|

||||

computation happening at runtime; but going through `zml.call` allows ZML to

|

||||

generate an MLIR representation that is closer to the Zig code and therefore

|

||||

easier to read.

|

||||

|

||||

We can test the MLP layer with the `zml.testing.testLayer` utility:

|

||||

|

||||

```zig

|

||||

pub fn asyncMain() !void {

|

||||

...

|

||||

|

||||

var ctx = try zml.Context.init();

|

||||

defer ctx.deinit();

|

||||

const platform = ctx.autoPlatform();

|

||||

const mlp_weights = try zml.aio.loadModelBuffers(Mlp, mlp_shape, model_weights, allocator, platform);

|

||||

|

||||

zml.testing.testLayer(platform, activations, "model.layers.0.mlp", mlp_shape, mlp_weights, 1e-3);

|

||||

}

|

||||

```

|

||||

|

||||

During this phase, you have three kinds of errors that can appear:

|

||||

|

||||

* Zig compilation errors: we've all have been there, learning a new language

|

||||

can be tough. Normally, the compiler should help you figure out what's wrong.

|

||||

You can also check [ZML concepts](../learn/concepts.md) that explains types used

|

||||

by ZML.

|

||||

* Buffer not found errors: be careful that you need to use

|

||||

the naming scheme of the inference pipeline when loading the activations.

|

||||

Depending on how you write your code, you may have a different naming

|

||||

convention in the model file and in the activation file. This is because in

|

||||

Python, and in particular the `transformers` library, it's not uncommon to

|

||||

wrap the model in a `Pipeline` object before using it. So a given layer may

|

||||

be named `layer.0.mlp` in the model file, but its activations may be saved

|

||||

under `model.layer.0.mlp`.

|

||||

* MLIR compilation errors: typically this is caused by a mathematical

|

||||

error in the `forward` function. To help here, you can log the shapes of the

|

||||

input and intermediary values: `std.log.info("x: {}", .{x})`, and put similar

|

||||

print statements in the Python code. You can also consider splitting a big

|

||||

layer into smaller parts. Since our code only explicitly captures

|

||||

`torch.nn.Module` input/output, you may need to modify the Python script to

|

||||

add some extra tensors to the dictionary with example input/output of a

|

||||

specific function.

|

||||

|

||||

## General tips

|

||||

|

||||

* Porting models can be hard, especially if the original code is messy, has

|

||||

poor comments, behaves differently on different input shapes, or has unused

|

||||

code paths. Start by identifying parts of the Python code which are

|

||||

**unused**. It is common in research code that some code paths were written

|

||||

for one paper, but didn't get used in subsequent papers.

|

||||

|

||||

* ZML offers a few Pytorch specific helpers in `zml.torch`; those operators are

|

||||

offered to help you port models, but in general they may have weird APIs. If

|

||||

you're lucky and the code you are porting has comments indicating "tags", eg

|

||||

"C,W,H" of tensors, you can port this to actual tensor attributes using

|

||||

`x.withTags(.{.c, .w, .h})`, and use those tags (eg `.c`) to refer to axes

|

||||

instead of offsets. E.g. in Pytorch: `x.sum(0) # reduce over channel axis`

|

||||

becomes `x.sum(.c)`. More on this topic in

|

||||

["Working with tensors"](../tutorials/working_with_tensors.md).

|

||||

41

docs/howtos/huggingface_access_token.md

Normal file

41

docs/howtos/huggingface_access_token.md

Normal file

@ -0,0 +1,41 @@

|

||||

|

||||

# Huggingface Token Authentication

|

||||

|

||||

Some models have restrictions and may require some sort of approval or

|

||||

agreement process, which, by consequence, **requires token-authentication with

|

||||

Huggingface**.

|

||||

|

||||

Here is how you can generate a **"read-only public repositories"** access token

|

||||

to log into your account on Huggingface, directly from `bazel`, in order to

|

||||

download models.

|

||||

|

||||

* log in at [https://huggingface.co/settings/tokens](https://huggingface.co/settings/tokens).

|

||||

* click on "Create new token"

|

||||

* give the token a name, eg `zml_public_repos`

|

||||

* under _Repositories_, grant the following permission: "Read access to

|

||||

contents of all public gated repos you can access".

|

||||

* at the bottom, click on "Create token".

|

||||

* copy the token by clicking `Copy`. **You won't be able to see it again.**

|

||||

* the token looks something like `hf_abCdEfGhijKlM`.

|

||||

* store the token on your machine (replace the placeholder with your actual

|

||||

token):

|

||||

|

||||

```

|

||||

echo -n <hf_my_token> > `$HOME/.cache/huggingface/token`

|

||||

```

|

||||

|

||||

The `-n` is important in order to not append an "end of line" character at the

|

||||

end of the file that would corrupt the token.

|

||||

|

||||

Now you're ready to download a gated model like `Meta-Llama-3-8b`!

|

||||

|

||||

**Example:**

|

||||

|

||||

```

|

||||

# requires token in $HOME/.cache/huggingface/token

|

||||

cd examples

|

||||

bazel run -c opt //llama:Meta-Llama-3-8b

|

||||

bazel run -c opt //llama:Meta-Llama-3-8b -- --promt="Once upon a time,"

|

||||

```

|

||||

|

||||

|

||||

33

docs/huggingface-access-token.md

Normal file

33

docs/huggingface-access-token.md

Normal file

@ -0,0 +1,33 @@

|

||||

# Running Gated Huggingface Models with Token Authentication

|

||||

|

||||

Some models have restrictions and may require some sort of approval or agreement

|

||||

process, which, by consequence, **requires token-authentication with Huggingface**.

|

||||

|

||||

Here is how you can generate a **"read-only public repositories"** access token to log into your account on Huggingface, directly from `bazel`, in order to download models.

|

||||

|

||||

* log in at [https://huggingface.co/settings/tokens](https://huggingface.co/settings/tokens).

|

||||

* click on "Create new token"

|

||||

* give the token a name, eg `zml_public_repos`,

|

||||

* under _Repositories_, grant the following permission: "Read access to contents of all public gated repos you can access".

|

||||

* at the bottom click on "Create token".

|

||||

* copy the token by clicking `Copy`. **You won't be able to see it again.**

|

||||

* the token looks something like `hf_abCdEfGhijKlM`.

|

||||

* store the token on your machine (replace the placeholder with your actual token):

|

||||

|

||||

```

|

||||

echo -n <hf_my_token> > `$HOME/.cache/huggingface/token`

|

||||

```

|

||||

|

||||

The `-n` is important in order to not append an "end of line" character at the end of the file that would corrupt the token.

|

||||

|

||||

Now you're ready to download a gated model like `Meta-Llama-3-8b`!

|

||||

|

||||

**Example:**

|

||||

|

||||

```

|

||||

# requires token in $HOME/.cache/huggingface/token

|

||||

cd examples

|

||||

bazel run -c opt //llama:Meta-Llama-3-8b

|

||||

bazel run -c opt //llama:Meta-Llama-3-8b -- --promt="Once upon a time,"

|

||||

```

|

||||

|

||||

161

docs/learn/concepts.md

Normal file

161

docs/learn/concepts.md

Normal file

@ -0,0 +1,161 @@

|

||||

|

||||

# ZML Concepts

|

||||

|

||||

## Model lifecycle

|

||||

|

||||

ZML is an inference stack that helps running Machine Learning (ML) models, and

|

||||

particulary Neural Networks (NN).

|

||||

|

||||

The lifecycle of a model is implemented in the following steps:

|

||||

|

||||

1. Open the model file and read the shapes of the weights, but leave the

|

||||

weights on the disk.

|

||||

|

||||

2. Using the loaded shapes and optional metadata, instantiate a model struct

|

||||

with `Tensor`s, representing the shape and layout of each layer of the NN.

|

||||

|

||||

3. Compile the model struct and it's `forward` function into an accelerator

|

||||

specific executable. The `forward` function describes the mathematical

|

||||

operations corresponding to the model inference.

|

||||

|

||||

4. Load the model weights from disk, onto the accelerator memory.

|

||||

|

||||

5. Bind the model weights to the executable.

|

||||

|

||||

6. Load some user inputs, and copy them to the accelerator.

|

||||

|

||||

7. Call the executable on the user inputs.

|

||||

|

||||

8. Fetch the returned model output from accelerator into host memory, and

|

||||

finally present it to the user.

|

||||

|

||||

9. When all user inputs have been processed, free the executable resources and

|

||||

the associated weights.

|

||||

|

||||

|

||||

**Some details:**

|

||||

|

||||

Note that the compilation and weight loading steps are both bottlenecks to your

|

||||

model startup time, but they can be done in parallel. **ZML provides

|

||||

asynchronous primitives** to make that easy.

|

||||

|

||||

The **compilation can be cached** across runs, and if you're always using the

|

||||

same model architecture with the same shapes, it's possible to by-pass it

|

||||

entirely.

|

||||

|

||||

The accelerator is typically a GPU, but can be another chip, or even the CPU

|

||||

itself, churning vector instructions.

|

||||

|

||||

|

||||

## Tensor Bros.

|

||||

|

||||

In ZML, we leverage Zig's static type system to differentiate between a few

|

||||

concepts, hence we not only have a `Tensor` to work with, like other ML

|

||||

frameworks, but also `Buffer`, `HostBuffer`, and `Shape`.

|

||||

|

||||

Let's explain all that.

|

||||

|

||||

* `Shape`: _describes_ a multi-dimension array.

|

||||

- `Shape.init(.{16}, .f32)` represents a vector of 16 floats of 32 bits

|

||||

precision.

|

||||

- `Shape.init(.{512, 1024}, .f16)` represents a matrix of `512*1024` floats

|

||||

of 16 bits precision, i.e. a `[512][1024]f16` array.

|

||||

|

||||

A `Shape` is only **metadata**, it doesn't point to or own any memory. The

|

||||

`Shape` struct can also represent a regular number, aka a scalar:

|

||||

`Shape.init(.{}, .i32)` represents a 32-bit signed integer.

|

||||

|

||||

* `HostBuffer`: _is_ a multi-dimensional array, whose memory is allocated **on

|

||||

the CPU**.

|

||||

- points to the slice of memory containing the array

|

||||

- typically owns the underlying memory - but has a flag to remember when it

|

||||

doesn't.

|

||||

|

||||

* `Buffer`: _is_ a multi-dimension array, whose memory is allocated **on an

|

||||

accelerator**.

|

||||

- contains a handle that the ZML runtime can use to convert it into a

|

||||

physical address, but there is no guarantee this address is visible from

|

||||

the CPU.

|

||||

- can be created by loading weights from disk directly to the device via

|

||||

`zml.aio.loadBuffers`

|

||||

- can be created by calling `HostBuffer.toDevice(accelerator)`.

|

||||

|

||||

* `Tensor`: is a mathematical object representing an intermediary result of a

|

||||

computation.

|

||||

- is basically a `Shape` with an attached MLIR value representing the

|

||||

mathematical operation that produced this `Tensor`.

|

||||

|

||||

|

||||

## The model struct

|

||||

|

||||

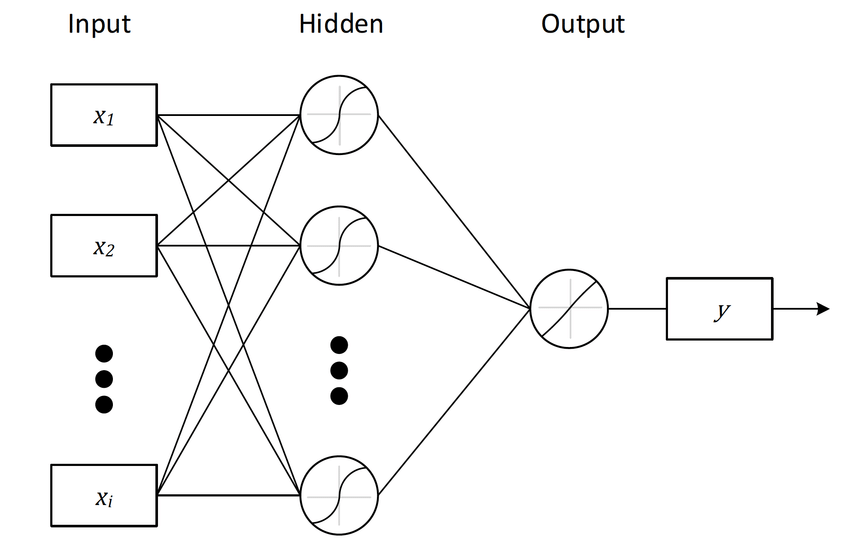

The model struct is the Zig code that describes your Neural Network (NN).

|

||||

Let's look a the following model architecture:

|

||||

|

||||

|

||||

|

||||

This is how we can describe it in a Zig struct:

|

||||

|

||||

```zig

|

||||

const Model = struct {

|

||||

input_layer: zml.Tensor,

|

||||

output_layer: zml.Tensor,

|

||||

|

||||

pub fn forward(self: Model, input: zml.Tensor) zml.Tensor {

|

||||

const hidden = self.input_layer.matmul(input);

|

||||

const output = self.output_layer.matmul(hidden);

|

||||

return output;

|

||||

}

|

||||

}

|

||||

```

|

||||

|

||||

NNs are generally seen as a composition of smaller NNs, which are split into

|

||||

layers. ZML makes it easy to mirror this structure in your code.

|

||||

|

||||

```zig

|

||||

const Model = struct {

|

||||

input_layer: MyOtherLayer,

|

||||

output_layer: MyLastLayer,

|

||||

|

||||

pub fn forward(self: Model, input: zml.Tensor) zml.Tensor {

|

||||

const hidden = self.input_layer.forward(input);

|

||||

const output = self.output_layer.forward(hidden);

|

||||

return output;

|

||||

}

|

||||

}

|

||||

```

|

||||

|

||||

`zml.nn` module provides a number of well-known layers to more easily bootstrap

|

||||

models.

|

||||

|

||||

Since the `Model` struct contains `Tensor`s, it is only ever useful during the

|

||||

compilation stage, but not during inference. If we want to represent the model

|

||||

with actual `Buffer`s, we can use the `zml.Bufferize(Model)`, which is a mirror

|

||||

struct of `Model` but with a `Buffer` replacing every `Tensor`.

|

||||

|

||||

## Strong type checking

|

||||

|

||||

Let's look at the model life cycle again, but this time annotated with the

|

||||

corresponding types.

|

||||

|

||||

1. Open the model file and read the shapes of the weights -> `zml.HostBuffer`

|

||||

(using memory mapping, no actual copies happen yet)

|

||||

|

||||

2. Instantiate a model struct -> `Model` struct (with `zml.Tensor` inside)

|

||||

|

||||

3. Compile the model struct and its `forward` function into an executable.

|

||||

`foward` is a `Tensor -> Tensor` function, executable is a

|

||||

`zml.Exe(Model.forward)`

|

||||

|

||||

4. Load the model weights from disk, onto accelerator memory ->

|

||||

`zml.Bufferized(Model)` struct (with `zml.Buffer` inside)

|

||||

|

||||

5. Bind the model weights to the executable `zml.ExeWithWeight(Model.forward)`

|

||||

|

||||

6. Load some user inputs (custom struct), encode them into arrays of numbers

|

||||

(`zml.HostBuffer`), and copy them to the accelerator (`zml.Buffer`).

|

||||

|

||||

7. Call the executable on the user inputs. `module.call` accepts `zml.Buffer`

|

||||

arguments and returns `zml.Buffer`

|

||||

|

||||

8. Return the model output (`zml.Buffer`) to the host (`zml.HostBuffer`),

|

||||

decode it (custom struct) and finally return to the user.

|

||||

36

docs/misc/style_guide.md

Normal file

36

docs/misc/style_guide.md

Normal file

@ -0,0 +1,36 @@

|

||||

|

||||

# ZML Style Guide

|

||||

|

||||

We prefer to keep it simple and adhere to the [Zig Style Guide](https://ziglang.org/documentation/0.13.0/#Style-Guide).

|

||||

|

||||

We use ZLS to auto-format code.

|

||||

|

||||

In addition, we try to adhere to the following house-rules:

|

||||

|

||||

### We favor:

|

||||

|

||||

```zig

|

||||

const x: Foo = .{ .bar = 1 }

|

||||

// over: const x = Foo{ .bar = 1}

|

||||

|

||||

pub fn method(self: Foo) void

|

||||

// over: pub fn method(self: Self) void

|

||||

|

||||

const foo = import("foo.zig"); foo.bar()

|

||||

// over: const bar = import("foo.zig").bar;

|

||||

// bar();

|

||||

|

||||

const Foo = import("foo.zig").Foo

|

||||

// over: const Foo = import("Foo.zig")

|

||||

//

|

||||

// Importing types directly instead of using

|

||||

// a namespace should be reserved for very

|

||||

// frequent types.

|

||||

|

||||

|

||||

/// Foo does X and returns Y

|

||||

pub fn foo() usize {

|

||||

// Descriptive doc comments over imperative ones

|

||||

```

|

||||

|

||||

As with the Zig Style Guide: use common sense 😊.

|

||||

145

docs/tutorials/getting_started.md

Normal file

145

docs/tutorials/getting_started.md

Normal file

@ -0,0 +1,145 @@

|

||||

|

||||

# Getting Started with ZML

|

||||

|

||||

In this tutorial, we will install `ZML` and run a few models locally.

|

||||

|

||||

## Prerequisites

|

||||

|

||||

First, let's checkout the ZML codebase. In a terminal, run:

|

||||

|

||||

```

|

||||

git clone https://github.com/zml/zml.git

|

||||

cd zml/

|

||||

```

|

||||

|

||||

We use `bazel` to build ZML and its dependencies. We recommend to download it

|

||||

through `bazelisk`, a version manager for `bazel`.

|

||||

|

||||

|

||||

### Install Bazel:

|

||||

|

||||

**macOs:**

|

||||

|

||||

```

|

||||

brew install bazelisk

|

||||

```

|

||||

|

||||

**Linux:**

|

||||

|

||||

```

|

||||

curl -L -o /usr/local/bin/bazel 'https://github.com/bazelbuild/bazelisk/releases/download/v1.20.0/bazelisk-linux-amd64'

|

||||

chmod +x /usr/local/bin/bazel

|

||||

```

|

||||

|

||||

|

||||

|

||||

## Run a pre-packaged model

|

||||

|

||||

ZML comes with a variety of model examples. See also our reference implementations in the [examples](https://github.com/zml/zml/tree/master/examples/) folder.

|

||||

|

||||

### MNIST

|

||||

|

||||

The [classic](https://en.wikipedia.org/wiki/MNIST_database) handwritten digits

|

||||

recognition task. The model is tasked to recognize a handwritten digit, which

|

||||

has been converted to a 28x28 pixel monochrome image. `Bazel` will download a

|

||||

pre-trained model, and the test dataset. The program will load the model,

|

||||

compile it, and classify a randomly picked example from the test dataset.

|

||||

|

||||

|

||||

On the command line:

|

||||

|

||||

```

|

||||

cd examples

|

||||

bazel run -c opt //mnist

|

||||

```

|

||||

|

||||

### Llama

|

||||

|

||||

Llama is a family of "Large Language Models", trained to generate text, based

|

||||

on the beginning of a sentence/book/article. This "beginning" is generally

|

||||

referred to as the "prompt".

|

||||

|

||||

#### TinyLlama, Stories 15M

|

||||

|

||||

To start, you can use a small model trained specifically on children's history

|

||||

books. This model has been trained by [Andrej Karpathy](https://x.com/karpathy);

|

||||

you can read more about it on his

|

||||

[Github](https://github.com/karpathy/llama2.c).

|

||||

|

||||

```

|

||||

cd examples

|

||||

bazel run -c opt //llama:TinyLlama-Stories-15M

|

||||

bazel run -c opt //llama:TinyLlama-Stories-15M -- --prompt="Once upon a time, there was a cute little dragon"

|

||||

```

|

||||

|

||||

#### OpenLLama 3B

|

||||

|

||||

```

|

||||

cd examples

|

||||

bazel run -c opt //llama:OpenLLaMA-3B

|

||||

bazel run -c opt //llama:OpenLLaMA-3B -- --prompt="Once upon a time,"

|

||||

```

|

||||

|

||||

#### Meta Llama 3 8B

|

||||

|

||||

This model has restrictions, see

|

||||

[here](https://huggingface.co/meta-llama/Meta-Llama-3-8B): it **requires

|

||||

approval from Meta on Huggingface**, which can take a few hours to get granted.

|

||||

|

||||

While waiting for approval, you can already

|

||||

[generate your Huggingface access token](../howtos/huggingface_access_token.md).

|

||||

|

||||

Once you've been granted access, you're ready to download a gated model like

|

||||

`Meta-Llama-3-8b`!

|

||||

|

||||

```

|

||||

# requires token in $HOME/.cache/huggingface/token

|

||||

cd examples

|

||||

bazel run -c opt //llama:Meta-Llama-3-8b

|

||||

bazel run -c opt //llama:Meta-Llama-3-8b -- --promt="Once upon a time,"

|

||||

```

|

||||

|

||||

|

||||

## Run Tests

|

||||

|

||||

```

|

||||

bazel test //zml:test

|

||||

```

|

||||

|

||||

## Running Models on GPU / TPU

|

||||

|

||||

You can compile models for accelerator runtimes by appending one or more of the

|

||||

following arguments to the command line when compiling or running a model:

|

||||

|

||||

- NVIDIA CUDA: `--@zml//runtimes:cuda=true`

|

||||

- AMD RoCM: `--@zml//runtimes:rocm=true`

|

||||

- Google TPU: `--@zml//runtimes:tpu=true`

|

||||

- **AVOID CPU:** `--@zml//runtimes:cpu=false`

|

||||

|

||||

The latter, avoiding compilation for CPU, cuts down compilation time.

|

||||

|

||||

|

||||

So, to run the OpenLLama model from above on your host sporting an NVIDIA GPU,

|

||||

run the following:

|

||||

|

||||

```

|

||||

cd examples

|

||||

bazel run -c opt //llama:OpenLLaMA-3B \

|

||||

--@zml//runtimes:cuda=true \

|

||||

-- --prompt="Once upon a time,"

|

||||

```

|

||||

|

||||

|

||||

## Where to go next:

|

||||

|

||||

In [Deploying Models on a Server](../howtos/deploy_on_server.md), we show how you can

|

||||

cross-compile and package for a specific architecture, then deploy and run your

|

||||

model. Alternatively, you can also [dockerize](../howtos/dockerize_models.md) your

|

||||

model.

|

||||

|

||||

You might also want to check out the

|

||||

[examples](https://github.com/zml/zml/tree/master/examples), read through the

|

||||

[documentation](../README.md), start

|

||||

[writing your first model](../tutorials/write_first_model.md), or read about more

|

||||

high-level [ZML concepts](../learn/concepts.md).

|

||||

|

||||

7

docs/tutorials/working_with_tensors.md

Normal file

7

docs/tutorials/working_with_tensors.md

Normal file

@ -0,0 +1,7 @@

|

||||

|

||||

# Simplifying Dimension Handling with Tagged Tensors

|

||||

|

||||

### Coming Soon...

|

||||

|

||||

See [ZML Concepts](../learn/concepts.md) for an introduction to Tensors and Shapes.

|

||||

|

||||

521

docs/tutorials/write_first_model.md

Normal file

521

docs/tutorials/write_first_model.md

Normal file

@ -0,0 +1,521 @@

|

||||

|

||||

# Writing your first model

|

||||

|

||||

**In this short guide, we will do the following:**

|

||||

|

||||

- clone ZML to work directly within the prepared example folder

|

||||

- add Zig code to implement our model

|

||||

- add some Bazel to integrate our code with ZML

|

||||

- no weights files or anything external is required for this example

|

||||

|

||||

The reason we're doing our excercise in the `examples` folder is because it's

|

||||

especially prepared for new ZML projects. It contains everything needed for ZML

|

||||

development. From `bazel` configs to `vscode` settings, and `neovim` LSP

|

||||

support. The `examples` folder serves as a cookiecutter ZML project example,

|

||||

with just a few example models added already.

|

||||

|

||||

**Note:** _The `examples` folder is self-contained. You **can** make a copy of

|

||||

it to a location outside of the ZML repository. Simply remove all examples you

|

||||

don't need and use it as a template for your own projects._

|

||||

|

||||

So, let's get started, shall we?

|

||||

|

||||

|

||||

|

||||

**If you haven't done so already, please [install bazel](../tutorials/getting_started.md)**.

|

||||

|

||||

|

||||

|

||||

Check out the ZML repository. In the `examples` directory, create a new folder

|

||||

for your project. Let's call it `simple_layer`.

|

||||

|

||||

```

|

||||

git clone https://github.com/zml/zml.git

|

||||

cd zml/examples

|

||||

mkdir -p simple_layer

|

||||

```

|

||||

|

||||

... and add a file `main.zig` to it, along with a bazel build file:

|

||||

|

||||

```

|

||||

touch simple_layer/main.zig

|

||||

touch simple_layer/BUILD.bazel

|

||||

```

|

||||

|

||||

By the way, you can access the complete source code of this walkthrough here:

|

||||

|

||||

- [main.zig](https://github.com/zml/zml/tree/master/examples/simple_layer/main.zig)

|

||||

- [BUILD.bazel](https://github.com/zml/zml/tree/master/examples/simple_layer/BUILD.bazel)

|

||||

|

||||

|

||||

|

||||

## The high-level Overview

|

||||

|

||||

Before firing up our editor, let's quickly talk about a few basic ZML

|

||||

fundamentals.

|

||||

|

||||

In ZML, we describe a _Module_, which represents our AI model, as a Zig

|

||||

`struct`. That struct can contain Tensor fields that are used for computation,

|

||||

e.g. weights and biases. In the _forward_ function of a Module, we describe the

|

||||

computation by calling tensor operations like _mul_, _add_, _dotGeneral_,

|

||||

_conv2D_, etc., or even nested Modules.

|

||||

|

||||

ZML creates an MLIR representation of the computation when we compile the

|

||||

Module. For compilation, only the _Shapes_ of all tensors must be known. No

|

||||

actual tensor data is needed at this step. This is important for large models:

|

||||

we can compile them while the actual weight data is being fetched from disk.

|

||||

|

||||

To accomplish this, ZML uses a _BufferStore_. The _BufferStore_ knows how to

|

||||

only load shapes and when to load actual tensor data. In our example, we will

|

||||

fake the _BufferStore_ a bit: we won't load from disk; we'll use float arrays

|

||||

instead.

|

||||

|

||||

After compilation is done (and the _BufferStore_ has finished loading weights),

|

||||

we can send the weights from the _BufferStore_ to our computation device. That

|

||||

produces an _executable_ module which we can call with different _inputs_.

|

||||

|

||||

In our example, we then copy the result from the computation device to CPU

|

||||

memory and print it.

|

||||

|

||||